Deep reinforcement learning is a branch of machine learning that focuses on learning behaviours and has recently become a powerful framework for solving problems with high-dimensional state spaces.

One of the first breakthroughs of deep reinforcement learning was DeepMind's Deep Q-Network (2013), which used the Arcade Learning Environment to learn how to play a series of different Atari games effectively.

This project built upon this foundation by proposing a model that utilises more modern Reinforcement Learning algorithms, such as Double Deep Q-Learning and REINFORCE, while allowing user customisation of parameters.
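
To illustrate what Double Deep Q-Learning changes, here is a minimal sketch of its target calculation in plain Python. The function names and the example Q-values are assumptions made for illustration; they are not taken from the project, which used PyTorch.

```python
def dqn_target(reward, done, next_q_target, gamma=0.99):
    """Standard DQN target, shown for comparison."""
    if done:
        # Terminal transition: there is no future return to bootstrap from.
        return reward
    # One network both selects and evaluates the best next action.
    return reward + gamma * max(next_q_target)


def double_dqn_target(reward, done, next_q_online, next_q_target, gamma=0.99):
    """Double DQN target for a single transition.

    next_q_online / next_q_target hold the next state's Q-value for every
    action, as estimated by the online and target networks respectively.
    """
    if done:
        return reward
    # The online network selects the action...
    best_action = max(range(len(next_q_online)), key=next_q_online.__getitem__)
    # ...and the target network evaluates it, which reduces the
    # overestimation caused by taking a max over noisy estimates.
    return reward + gamma * next_q_target[best_action]


# Hypothetical Q-values for a three-action game.
online = [1.0, 3.0, 2.0]
target = [5.0, 0.5, 4.0]
standard = dqn_target(1.0, False, target, gamma=0.5)             # 3.5
double = double_dqn_target(1.0, False, online, target, gamma=0.5)  # 1.25
```

In this example the standard target trusts the target network's largest (possibly inflated) value, while the Double DQN target uses the value of the action the online network actually prefers.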

Optimised agent playing Enduro

Optimised agent playing Breakout

Optimised agent playing PacMan

The project software was organised to allow customisation of every parameter, so the user could examine how altering different values affected the agent's performance. Each playthrough stored the specified parameters in JSON format, allowing the user to easily replay previous iterations and alter parameters. The project was written in Python and used PyTorch operations to train efficiently.
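
As a rough sketch of how storing parameters as JSON makes a run replayable, the snippet below saves a parameter set and reloads it with one value changed. The helper names (`save_run`, `load_run`), the file name and the parameter keys are all hypothetical; the project's actual schema may differ.

```python
import json
import tempfile
from pathlib import Path


def save_run(params: dict, path: Path) -> None:
    """Write one run's parameters to disk as human-readable JSON."""
    path.write_text(json.dumps(params, indent=2, sort_keys=True))


def load_run(path: Path, **overrides) -> dict:
    """Reload a stored run, optionally altering some parameters."""
    params = json.loads(path.read_text())
    params.update(overrides)
    return params


# Hypothetical parameter names, chosen only for illustration.
params = {
    "game": "Breakout",
    "algorithm": "double_dqn",
    "gamma": 0.99,
    "learning_rate": 0.00025,
}

with tempfile.TemporaryDirectory() as tmp:
    run_file = Path(tmp) / "run_001.json"
    save_run(params, run_file)
    replay = load_run(run_file)               # exact replay of the old run
    variant = load_run(run_file, gamma=0.95)  # same run with one value altered
```

Keeping every run in its own file means an interesting result can always be traced back to the exact values that produced it.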

Overall, the project was a success and you can run the agent for yourself by visiting my GitHub account.

Managing contacts has always been a challenge, especially for smaller companies that can't afford the large licensing fees required to run state-of-the-art contact management software.

Over the summer of 2022 I worked with a small company, IMS Publications, to completely overhaul their current system and instead host a bespoke website that handles every transaction between themselves and their clients.

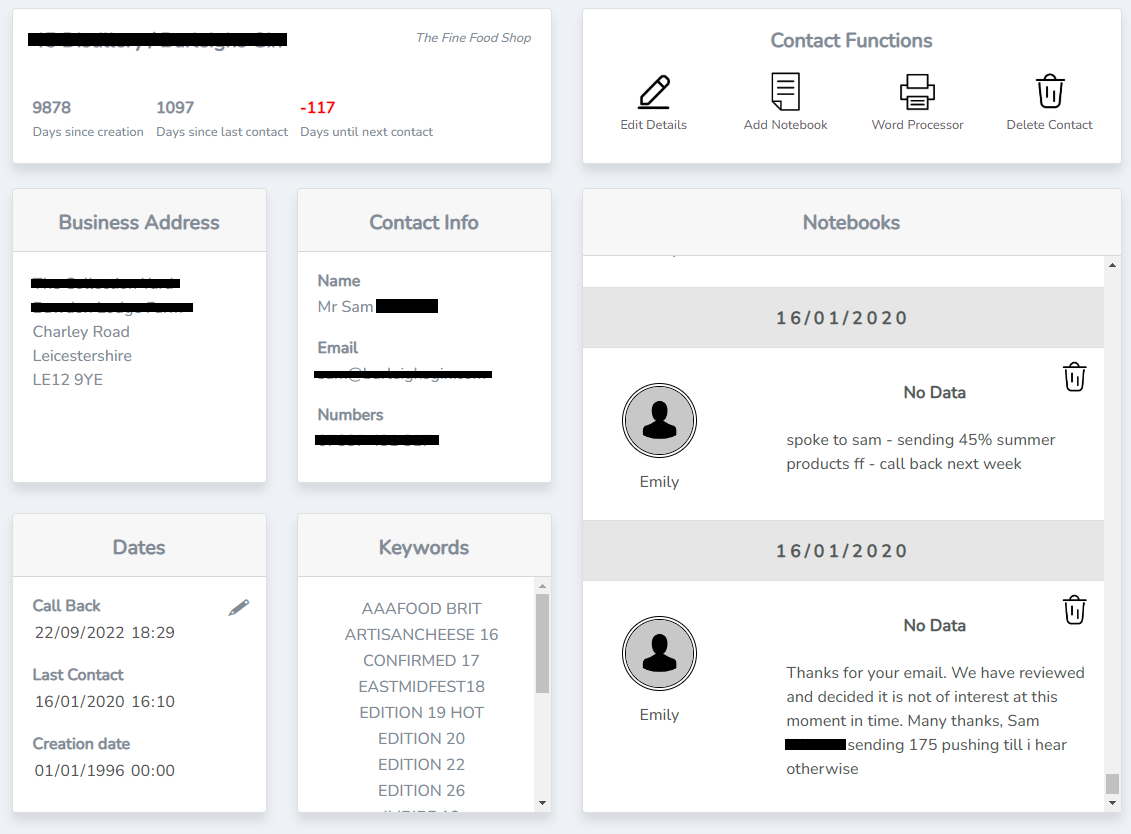

Example of the contact page on InTouch. Sensitive information has been removed.

The website, called InTouch, has the following main functionalities:

- Users can search over 65,000 companies by search field or keyword

- New contacts can be added and validated with ease

- All activity is logged and can be viewed by team leaders; statistics automatically track the efficiency of each salesman

- Many other customised features are employed to ensure that InTouch suits the company's needs exactly, allowing for fast and accurate handling of contacts.

The main challenge with installing a completely new system is data migration. For the past 26 years, an old system (Tracker v2.0) had been used, which is so old that there is no documentation of it on the internet. Reading the data by inspecting the database files was therefore out of the question: all files were written in a format that has been deprecated for 25 years. To solve this issue, I developed autoclicker software that visually analysed every record in the old database and automatically generated a matching record in the new InTouch system. Over 65,000 records were successfully transferred onto the new system.
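
The overall shape of such a record-by-record migration can be sketched as below. This is only an illustration under stated assumptions: `read_next` stands in for the screen-reading step and `create_record` for whatever saves a record in the new system, and both are hypothetical placeholders rather than the real autoclicker or InTouch code. The clean-up and review steps are also assumptions.

```python
from typing import Callable, Optional


def migrate_records(
    read_next: Callable[[], Optional[dict]],
    create_record: Callable[[dict], None],
    required_fields=("company",),
):
    """Copy records one at a time until the legacy reader runs out.

    Returns (migrated_count, skipped_records).
    """
    migrated, skipped = 0, []
    while (record := read_next()) is not None:
        # Trim stray whitespace picked up while reading the screen.
        cleaned = {key: value.strip() for key, value in record.items()}
        if any(not cleaned.get(field) for field in required_fields):
            # Keep incomplete records for manual review instead of dropping them.
            skipped.append(record)
            continue
        create_record(cleaned)
        migrated += 1
    return migrated, skipped


# Stand-in data replacing the real legacy screens.
legacy = iter([
    {"company": " Acme Ltd ", "phone": "0113 000 0000"},
    {"company": "", "phone": "0113 000 0001"},
])
new_system = []
count, needs_review = migrate_records(lambda: next(legacy, None), new_system.append)
```

Passing the reader and writer in as functions keeps the loop testable without the legacy software running.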

For my 4th year group project, we decided to tackle the problem of new students being overwhelmed during their initial experience at university, specifically the University of Leeds, which this application was designed around.

This project presented an innovative chatbot that combines IBM technologies, artificial intelligence and augmented reality in one Unity mobile application.

The application instantly answers the university-related questions of new students, guides them to their lecture halls in augmented reality, recommends the societies most suited to them and more.

These features are presented through an interactive virtual avatar, also available in augmented reality. The application also offers a range of accessibility features, including language translation, speech-to-text and colour-blind modes.

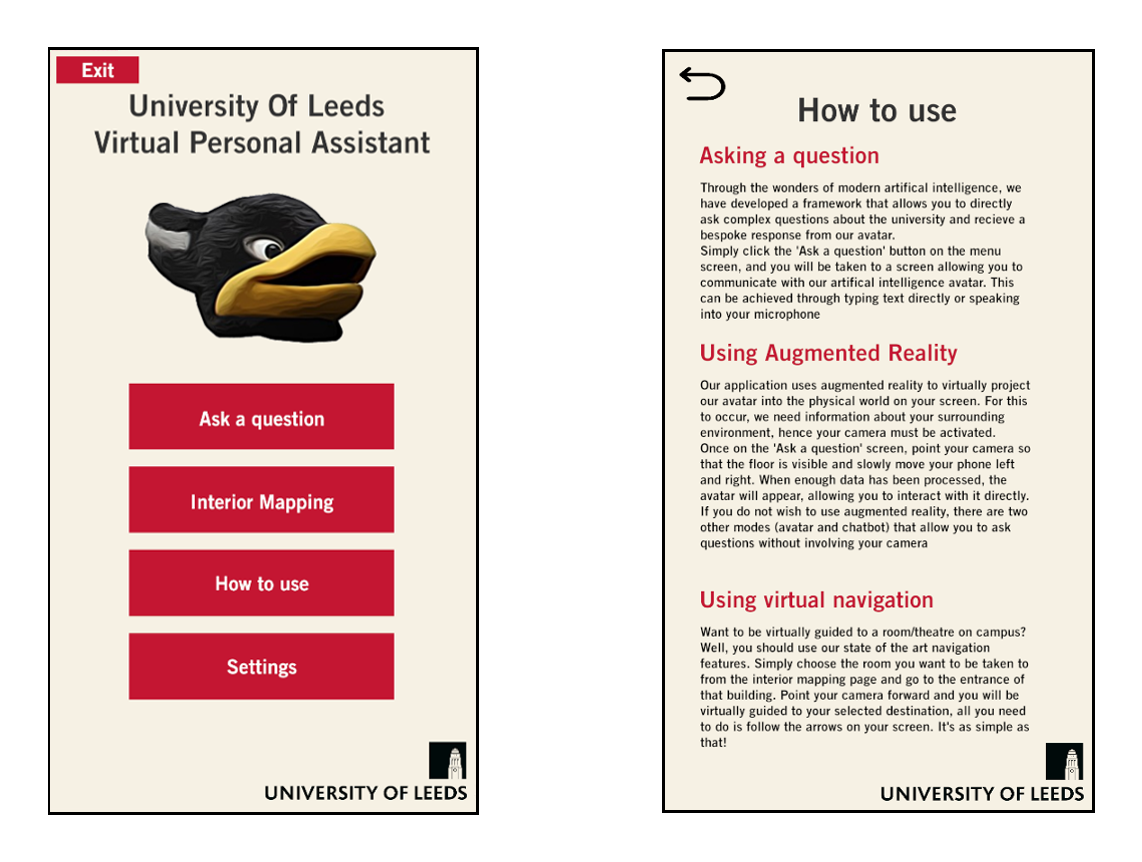

The user interface theme used for the application. Left is the home page, right is the info page

My main contributions to the project were handling all Unity application details, user interactions and the integration of the different technologies. The user was able to interact with the chatbot in several different ways:

- Augmented reality: an avatar was superimposed onto the camera view. The user could ask questions via keyboard input or speech-to-text, and the avatar would reply in a speech bubble as well as through text-to-speech. A variety of animations accompanied each interaction with the user; it was even possible to make the avatar dance.

- Unity 3D environment: if augmented reality was not an option, the user could interact with the avatar in a virtual environment in Unity. The weather in this environment depended on the current location of the user; if it was snowing, the ground would have a snow texture and the clouds would produce snow particles. The weather simulation also covered wind speed, cloud density and air moisture (mist).

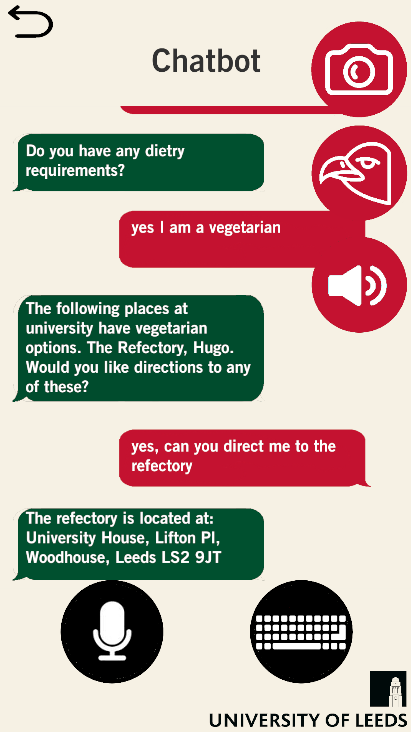

- Simple texting interface: the user could also use a simple text-message interface to interact with the chatbot, which was aimed at users who were less comfortable with technology or wanted a quick response.

Augmented reality scene of the application

Avatar scene of the application

Simple text scene of the application

I was also responsible for building all user interface scenes, language translation, user settings, message passing and the integration of all the different APIs used in the project.

Overall, the project was successful, and the showcase video can be found here

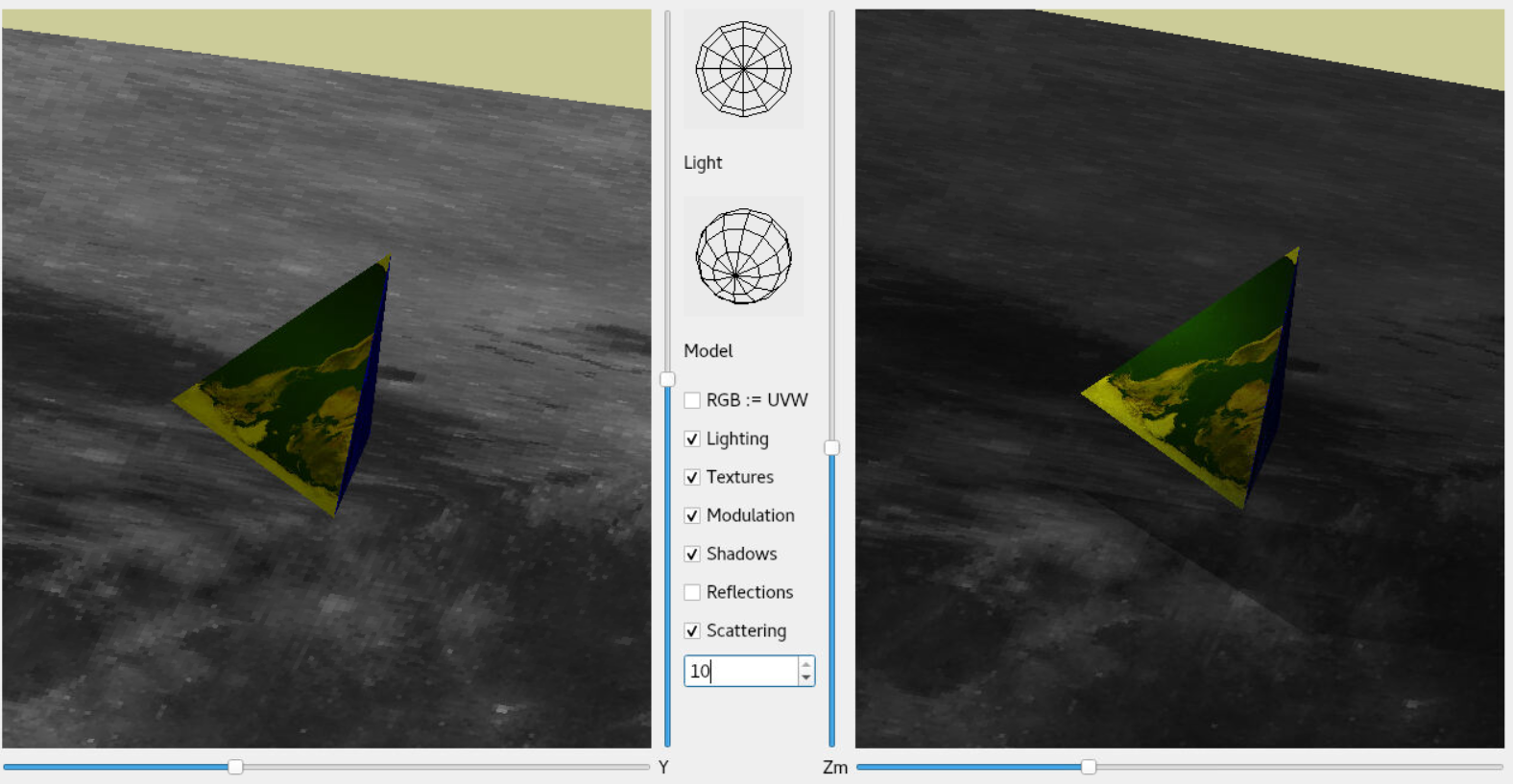

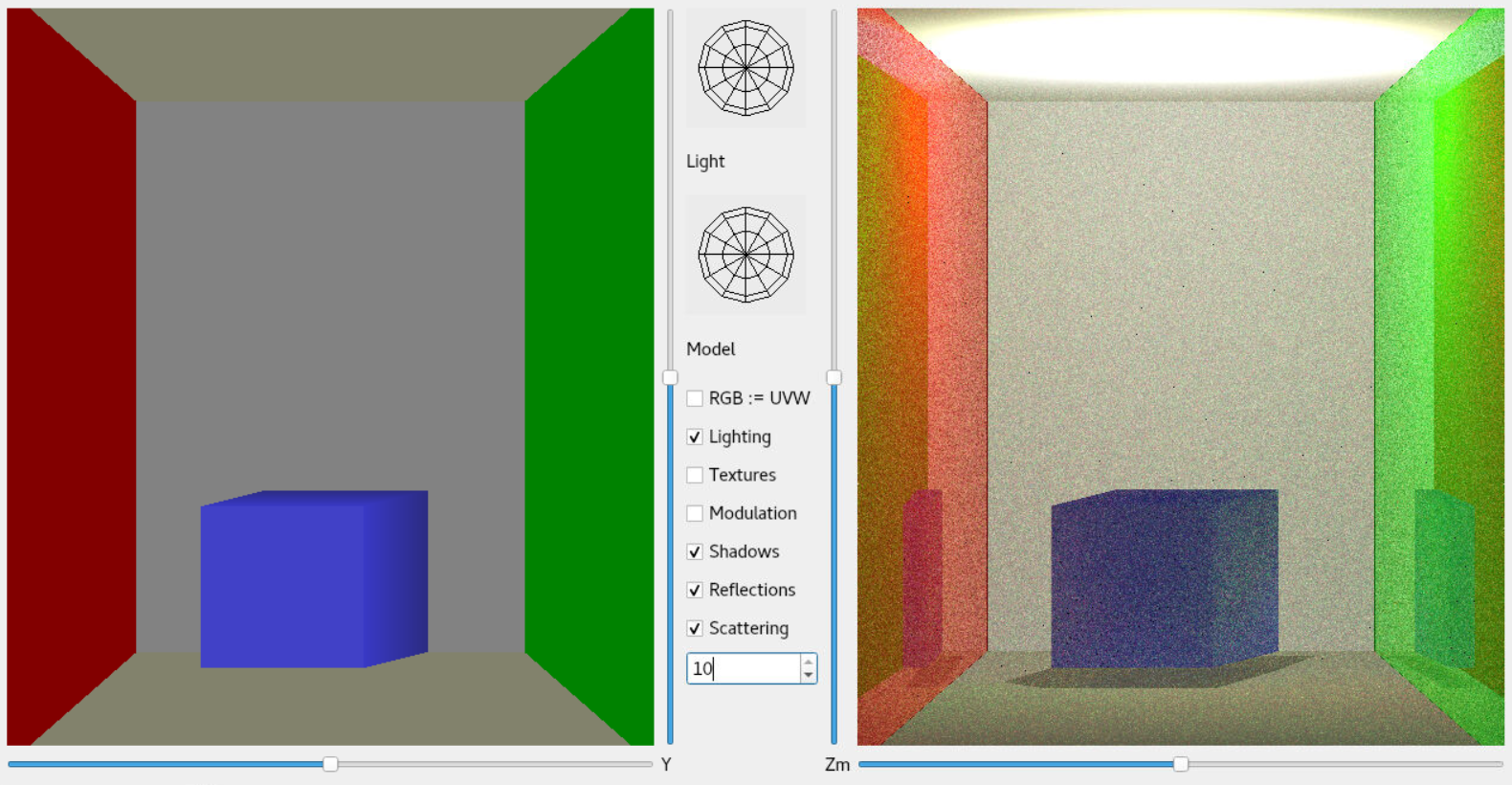

One project I am particularly proud of is my 4th year Fundamentals of Modelling and Rendering coursework, which involved creating a Monte-Carlo recursive raytracer from scratch in pure C++. The raytracer had to be powerful enough to calculate Blinn-Phong lighting, textures, shadows, reflections and refractions.

A triangle with an earth texture suspended above a plane with a moon texture. Left image is OpenGL, right image is the raytracer.

The user would load in a model and the raytracer would calculate the colour of every pixel by shooting a ray into the environment according to the current rotation and position of the virtual camera. If the ray intersected an object, the raytracer would calculate the colour of the surface (with Blinn-Phong lighting and texturing) and shoot further rays into the environment from that point.
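
The Blinn-Phong part of the surface colour calculation can be sketched as follows. This is a simplified Python illustration rather than the coursework's C++ code: it returns a single intensity instead of an RGB colour, omits the ambient term and texturing, and the function and parameter names are assumptions.

```python
import math


def normalise(v):
    """Scale a 3D vector to unit length (zero vectors are returned as-is)."""
    length = math.sqrt(sum(c * c for c in v))
    return v if length == 0.0 else tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def blinn_phong(normal, to_light, to_eye, diffuse_k, specular_k, shininess):
    """Return the diffuse + specular intensity at a surface point.

    All direction vectors point away from the surface.
    """
    n, l, e = normalise(normal), normalise(to_light), normalise(to_eye)
    n_dot_l = dot(n, l)
    if n_dot_l <= 0.0:
        # The light is behind the surface, so it contributes nothing.
        return 0.0
    # Lambertian diffuse term: brightest when the light is along the normal.
    diffuse = diffuse_k * n_dot_l
    # Blinn-Phong uses the half vector between the light and eye directions
    # in place of the reflected light direction used by classic Phong.
    half = normalise(tuple(a + b for a, b in zip(l, e)))
    specular = specular_k * max(dot(n, half), 0.0) ** shininess
    return diffuse + specular


# Light and viewer directly above the surface: the brightest possible case.
head_on = blinn_phong((0, 0, 1), (0, 0, 1), (0, 0, 1), 0.5, 0.5, 32)
# Light below the surface: no direct contribution.
behind = blinn_phong((0, 0, 1), (0, 0, -1), (0, 0, 1), 0.5, 0.5, 32)
```

A higher `shininess` narrows the specular highlight, which is what makes a surface look polished rather than matte.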

The direction of each new ray was calculated using the Monte-Carlo method. Once the maximum number of bounces was reached, all the colour values were summed together by recursively tracing every ray and multiplying each by its contribution to the final colour.
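
The recursion described above can be sketched in a few lines of Python. Several things here are assumptions made for illustration: the sampling is uniform over the hemisphere (the write-up does not say which distribution was used), colours are single numbers instead of RGB, only one bounce ray is traced per hit, and `scene_hit` is a hypothetical stand-in for the real intersection test.

```python
import math
import random


def sample_hemisphere(normal, rng):
    """Pick a uniformly random unit direction on the hemisphere around `normal`."""
    while True:
        # Rejection sampling: keep only points inside the unit sphere.
        d = tuple(rng.uniform(-1.0, 1.0) for _ in range(3))
        length_sq = sum(c * c for c in d)
        if 1e-12 < length_sq <= 1.0:
            break
    length = math.sqrt(length_sq)
    d = tuple(c / length for c in d)
    # Flip directions that point below the surface.
    if sum(a * b for a, b in zip(d, normal)) < 0.0:
        d = tuple(-c for c in d)
    return d


def trace(ray, scene_hit, depth, max_depth, rng):
    """Return the colour seen along `ray`, following random bounces."""
    if depth >= max_depth:
        return 0.0  # bounce limit reached: stop recursing
    hit = scene_hit(ray)
    if hit is None:
        return 0.0  # the ray left the scene
    bounce_ray = (hit["point"], sample_hemisphere(hit["normal"], rng))
    incoming = trace(bounce_ray, scene_hit, depth + 1, max_depth, rng)
    # Local shading plus the bounced light, weighted by its contribution.
    return hit["local_colour"] + hit["reflectivity"] * incoming


def toy_scene(ray):
    """Toy scene in which every ray hits the same half-reflective surface."""
    return {"point": (0.0, 0.0, 0.0), "normal": (0.0, 0.0, 1.0),
            "local_colour": 1.0, "reflectivity": 0.5}


rng = random.Random(0)
camera_ray = ((0.0, 0.0, 5.0), (0.0, 0.0, -1.0))
colour = trace(camera_ray, toy_scene, 0, 3, rng)  # 1 + 0.5 * (1 + 0.5 * 1) = 1.75
```

A real raytracer would average many such samples per pixel to reduce the noise that random bounce directions introduce.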

The final images were noticeably more realistic than the basic OpenGL render of the same scene.

The famous Cornell Box with left and right reflective walls. Left image is OpenGL, right image is the raytracer.
